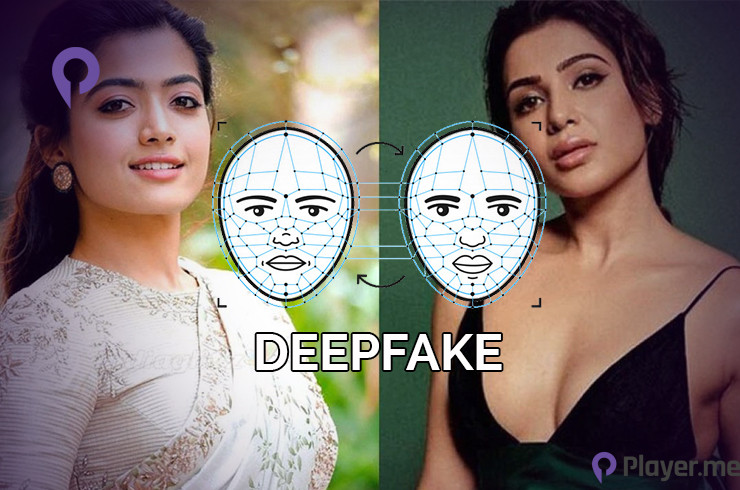

Indian actress Rashmika Mandanna has become the latest victim of Deepfake, as an altered video featuring her has gone viral with over 2.4 million views on X. At first glance, the video shows nothing out of the ordinary: Rashmika Mandanna appears to enter an elevator. On closer inspection, however, it becomes clear that someone has morphed the video by superimposing Rashmika Mandanna’s face on a woman who is wearing an outfit with a plunging neckline and posing for a photo.

A woman named Zara Patel originally shared the video on Instagram on October 8. The identity of whoever created the Deepfake video remains unknown. Unfortunately, this is not an isolated case, as many celebrities, such as Gal Gadot and Taylor Swift, have fallen victim to underhanded use of the technology.

Deepfake Is Not Inherently Bad; How People Use It Makes It Bad

For starters, Deepfake is the 21st-century equivalent of Photoshop: it uses AI and machine learning to create new footage depicting events, statements, or actions that never occurred, and it does so with less skill, time and equipment than traditional editing. While people with limited skills may create videos that are easy to spot, more proficient users, such as marketers, can use this technology to cut the budgets for their video campaigns because they do not need an in-person actor.

However, to do so, a marketer must license the actor’s identity before using the technology. Using someone’s likeness without permission is unethical, as the case of Rashmika Mandanna shows. One good example of Deepfake technology is the video marketers made of the famous artist Salvador Dalí to act as host at a museum dedicated to him in Florida. The video brought Deepfake into the mainstream, and many other professions are now using the technology for the greater good.

For example, educators are now using Deepfake to bring historical figures to life realistically in the classroom, instead of relying on traditional visual and media formats. By recreating the voices and likenesses of historical figures, educators can make lessons more engaging, interactive and effective for students.

In addition, influencers and marketers are using Deepfake in a new global marketing trend to broaden their reach and expand their audience. For instance, with the consent and agreement of both parties, tech giants such as Meta are developing personal AI with the personalities of celebrities to grow their audience and create deeper engagement with fans.

The Cases of Rashmika Mandanna and Many Other Celebrities Highlight the Downside of Deepfake

However, despite all the good things we have said about Deepfake, the harm far outweighs the benefits. In the case of Rashmika Mandanna, the unauthorised use of her identity revives concerns about trust and ethics surrounding Deepfake.

As mentioned above, Deepfake creates new footage depicting events, statements, or actions that never occurred, and the more proficient the creator, the harder it is to ascertain the authenticity of the video. For example, many people who saw the Rashmika Mandanna video initially believed that the woman depicted was indeed her. However, after fellow actor Amitabh Bachchan tweeted in reply to the video, stating that Deepfakes are a strong case for legal action, many replayed the video and realised it was not her.

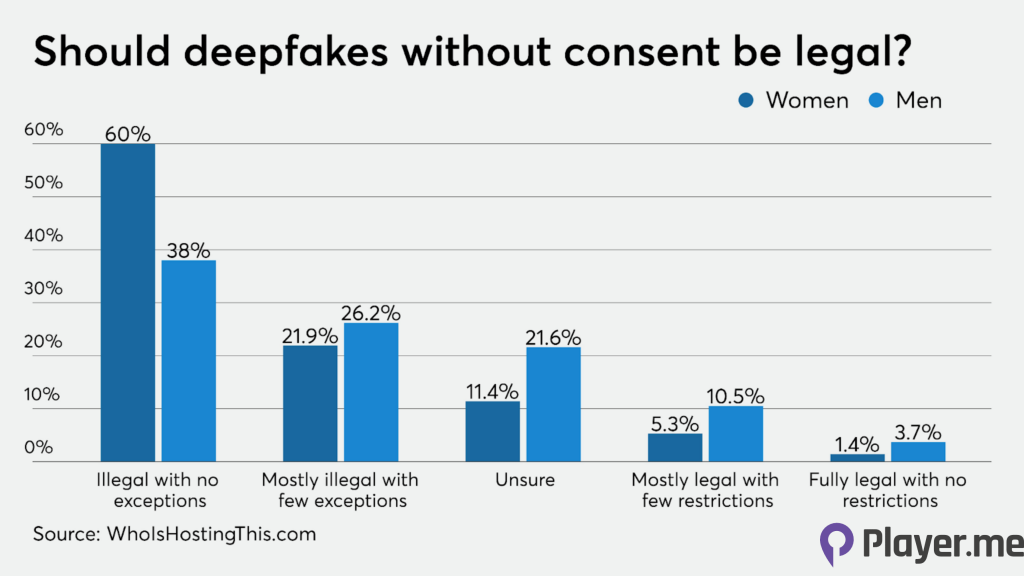

Therefore, we can conclude that it would be nearly impossible for anyone who does not know the person closely to tell the difference between real and fake. While some notable videos of popular figures, such as the one of former US president Barack Obama calling Donald Trump a complete dipshit, are much easier to spot, that does not excuse the fact that people are using Deepfake maliciously and illegally.

Most notably, Deepfake technology is used to create objectionable content such as pornographic videos, with famous female celebrities such as Taylor Swift and Ariana Grande among the victims. Additionally, the technology makes scams much simpler, as scammers can fabricate accusations or complaints against companies by impersonating a senior executive of another company. For instance, the U.K. subsidiary of a German energy firm transferred around £220,000 to a Hungarian bank account after a swindler used Deepfake technology to synthesise the CEO’s voice.

Actor Rashmika Mandanna Reacts Strongly Against the Use of Deepfake Technology

As the latest victim of Deepfake, Rashmika Mandanna reacted strongly against the morphed video and demanded legal action against the perpetrator. Amid public support, she took to X and Instagram to express her thoughts on the matter.

“I feel really hurt to share this and have to talk about the Deepfake video of me being spread online. Something like this is honestly, extremely scary not only for me but also for each one of us who today is vulnerable to so much harm because of how technology is being misused.”

She added that if she were not an actor, she would be at a loss to deal with something like this. “Today, as a woman and as an actor, I am thankful for my family, friends and well-wishers who are my protection and support system. But if this happened to me when I was in school or college, I genuinely cannot imagine how could I ever tackle this. We need to address this as a community and with urgency before more of us are affected by such identity theft.”

What Are Countries Doing to Solve the Grey Area of Deepfake?

To prevent cases such as Rashmika Mandanna’s and non-consensual Deepfake porn from occurring again, the EU has taken a proactive approach to managing Deepfakes, calling for increased research into detecting and preventing Deepfakes, as well as regulations requiring clear labelling of artificially generated content. Some notable policies and measures are:

- The AI Regulatory Framework

- The General Data Protection Regulation

- Copyright Regime

- e-Commerce Directive

- Digital Services Act

- Audiovisual Media Services Directive

- Code of Practice on Disinformation

- Action Plan On Disinformation

- Democracy Action Plan

However, despite updated policies and further enhancements, the threat of Deepfakes is still increasing. For instance, Reuters highlighted that in 2023 alone, about 500,000 video and voice Deepfakes were circulating on social media. So are the measures taken really effective?