Google has once again seized the spotlight with the introduction of its groundbreaking suite of generative AI services – Google Gemini. This innovative platform could disrupt the way businesses approach AI, offering a family of multimodal AI models tailored for a myriad of applications.

Google Officially Announced the Arrival of Gemini

Of late, the AI community has been buzzing with activity, and Google has emerged as a frontrunner in the race for AI leadership. The company’s commitment to advancing AI capabilities is evident in its recent endeavours, including the PaLM 2 update and the introduction of Google Bard. Seizing the opportunity amid the turbulence at OpenAI, Google unveiled Gemini, a generative AI designed to take on multifaceted challenges.

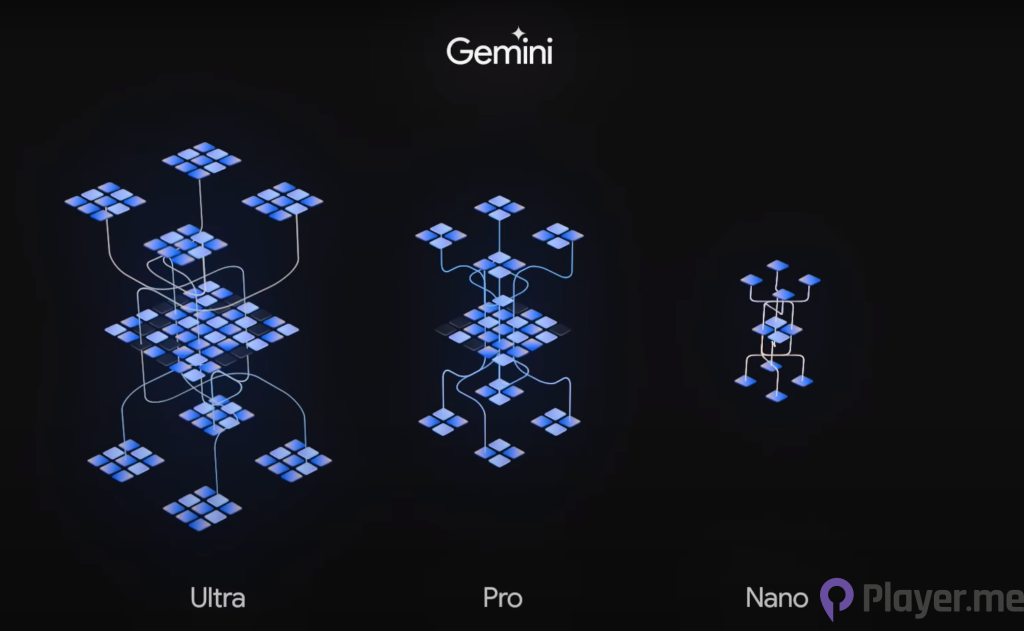

Understanding Google Gemini’s Three Tiers

1. Gemini Ultra: The Powerhouse of Multimodal AI

Gemini Ultra is the most powerful and capable model in Google’s multimodal family. Trained as a multimodal AI from its inception, it scored 90.0% on the Massive Multitask Language Understanding (MMLU) test, which Google says makes it the first model to outperform human experts on that benchmark. Google also reports that it outperforms competing models, including Alibaba’s open-source AI model, on 30 out of 32 academic benchmarks.

One of its remarkable capabilities lies in its proficiency in understanding and generating high-quality code in various programming languages such as Python, Java, C++, and Go. This powerhouse excels in coding benchmarks, including HumanEval and Natural2Code, underscoring its versatility across different domains.

Gemini Ultra is still undergoing fine-tuning, and Google plans to release it in 2024 as part of a new version of Google Bard known as Bard Advanced.

2. Gemini Pro: The Scalable Workhorse

Gemini Pro, positioned as the most scalable and all-purpose model, serves as the driving force behind Google Bard. This tier, described by Google as the “Lite” version, brings advanced reasoning, planning, and understanding to the forefront. In a competitive field where benchmarks matter, it outperformed GPT-3.5 in six out of eight benchmarks, showcasing its prowess in various language tasks.

As Google integrates this multimodal AI model into Bard, users can expect a more advanced and nuanced chatbot experience. Google emphasises that this integration is the most significant upgrade to Bard since its inception. Bard with Gemini Pro is available in English in more than 170 countries and territories, which positions it to scale AI capabilities across diverse tasks.

3. Gemini Nano: The Efficient On-Device Model

Completing the trio is Gemini Nano, the most efficient model designed for on-device tasks. Launching initially on the Google Pixel 8 Pro with its December Feature Drop, the AI model allows for on-device processing, paving the way for enhanced user experiences. This mobile-friendly version of the large language model brings the power of AI to Android phones, promising efficiency and adaptability.

As this AI model finds its way into Pixel 8 Pro devices, users can explore features like “Summarise in Recorder” and “Smart Reply” in Gboard, starting with popular messaging apps like WhatsApp. With on-device processing, it provides a glimpse into the future of AI seamlessly integrated into everyday mobile interactions.

Gemini’s Multimodal Marvel: Beyond Chatbots

While some may categorise Gemini as an elaborate chatbot, it goes beyond conventional language models. Technically classified as a Large Language Model (LLM), it sets itself apart by being trained as a multimodal AI from the outset. Unlike traditional models that specialise in a single modality, such as text or images, Gemini can handle a spectrum of inputs and tasks: speech, text, code, images, video, audio, and reasoning problems.

This multimodal prowess positions this AI model as a polymath or Renaissance Man in the LLM world. The capacity to comprehend and generate diverse content types equips Gemini with a unique advantage in understanding context and interpreting information accurately across various subject matters.
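
To make the idea of mixed content types concrete, here is a minimal sketch of how a single request could carry both text and an image. The field names (`contents`, `parts`, `inline_data`, `mime_type`) are assumptions based on the public Gemini REST API around launch, and `build_multimodal_body` is a hypothetical helper, so check the current documentation before relying on it.

```python
import base64
import json


def build_multimodal_body(prompt: str, image_bytes: bytes,
                          mime_type: str = "image/png") -> dict:
    """Combine one text prompt and one image into a single request body.

    Hypothetical helper: the JSON layout is an assumption drawn from the
    Gemini REST API as documented at launch, not a guaranteed contract.
    """
    return {
        "contents": [
            {
                "parts": [
                    # Text and image travel side by side as separate parts.
                    {"text": prompt},
                    {
                        "inline_data": {
                            "mime_type": mime_type,
                            # Binary data is base64-encoded so it fits in JSON.
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        }
                    },
                ]
            }
        ]
    }


# Placeholder bytes stand in for a real image file.
body = build_multimodal_body("What is shown in this picture?", b"\x89PNG")
print(json.dumps(body, indent=2))
```

The point of the sketch is that text and image arrive in the same prompt rather than being routed to separate specialised models.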


Gemini Applications: A Glimpse into the Future

Gemini’s capabilities extend far beyond mere chatbot interactions. Businesses can harness the power of this trained AI to customise solutions tailored to their specific needs. The possibilities are vast, ranging from recognising counterfeit products to imitating a helpful customer service representative or even explaining complex physics problems to students.

Google envisions its multimodal AI model being used for tasks such as processing raw audio to identify specific signals, analysing user intent to create customisable kits, and aiding scientists in discovering links in published research. The model’s potential extends to winning competitive programming contests, showcasing its adaptability and utility in diverse scenarios.

Gemini vs. Google Bard: A Symbiotic Relationship

Google Bard was an early attempt at consumer-facing AI. With the advent of Gemini, Google has elevated Bard’s capabilities by incorporating Gemini Pro. While Bard may be considered a more limited tool than Gemini itself, the integration brings advanced reasoning, planning, and understanding to the forefront of Bard’s capabilities.

The relationship between this multimodal AI model and Google Bard showcases the seamless integration of evolving AI technologies. Google’s commitment to enhancing consumer-facing AI experiences is evident in the continuous refinement of its models.

The Complex Interplay with PaLM 2

Amidst the unveiling of Gemini, the role of Google’s PaLM 2 model deserves attention. PaLM 2, a language-focused LLM introduced in 2023, excels in language tasks such as translation. While both Gemini and PaLM 2 are products of Google DeepMind, they serve different purposes: PaLM 2 focuses on language-centric tasks, while Gemini, with its multimodal capabilities, extends to diverse content types.

The interplay between PaLM 2 and Gemini remains somewhat enigmatic. While it is evident that both projects fall under the umbrella of Google DeepMind, the intricate connections and collaborations between the two models remain undisclosed. As Google continues to refine its AI offerings, the synergy between PaLM 2 and Gemini may unfold further.

Navigating Gemini’s Future: Availability and Pricing

For developers eager to explore the capabilities of this multimodal AI model, access is available through Google AI Studio or Google Cloud Vertex AI. Gemini Pro, the first tier released, is accessible starting December 13, 2023, with Gemini Ultra and Nano to follow in subsequent releases.
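
As a rough illustration of what developer access can look like, the sketch below builds (but does not send) a text-only request to Gemini Pro using only the Python standard library. The endpoint URL, the `gemini-pro` model name, and the request and response layouts are assumptions based on the public Gemini API around launch. `build_generate_request` and `extract_text` are hypothetical helpers, and an API key from Google AI Studio is assumed.

```python
import json
import urllib.request

# Assumed endpoint and model name; verify against current documentation.
API_BASE = "https://generativelanguage.googleapis.com/v1beta"
MODEL = "gemini-pro"


def build_generate_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build, without sending, a text-only generateContent request."""
    url = f"{API_BASE}/models/{MODEL}:generateContent?key={api_key}"
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_text(response_json: dict) -> str:
    """Join the text parts of the first candidate in a parsed response."""
    parts = response_json["candidates"][0]["content"]["parts"]
    return "".join(part.get("text", "") for part in parts)


# To actually call the service (requires network access and a valid key):
#   request = build_generate_request("Explain multimodal AI briefly.", "YOUR_API_KEY")
#   with urllib.request.urlopen(request) as response:
#       print(extract_text(json.load(response)))
```

Google also offers client libraries for this API. The raw HTTP form is used here only to keep the example dependency-free.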

While specific pricing details for the multimodal AI model remain elusive, businesses are encouraged to explore Google Cloud Vertex AI and its pricing structure for generative AI services. Pricing varies with the type of content and the specific AI service a business intends to use.

Google emphasises its commitment to deploying the AI model responsibly, with a focus on AI safety. While details on safety measures remain vague, the assurance that Gemini was trained with safety in mind implies a dedication to ethical AI deployment.

The Unanswered Questions: Ethical Considerations

While Google emphasises the safety of Gemini, ethical considerations surrounding content consumption, proprietary work, and potential societal impacts linger. The extent to which this one-of-a-kind AI model interacts with user-generated content, proprietary information, and conversations remains a topic of concern. Questions about job displacement, unethical monetisation, and exploitation of vulnerable groups persist, echoing broader ethical concerns associated with large language models.

Looking Ahead: Gemini’s Integration into Google Services

Google’s trajectory in AI development is marked by a commitment to refinement and continuous improvement. Gemini, with its multifaceted capabilities, is poised to become an integral component across various Google services. As Google experiments with its multimodal AI model in Search to enhance user experience, future integration into products like Ads, Chrome, and Duet AI is on the horizon.

The incorporation of this multimodal AI model into the fabric of Google’s offerings signifies the company’s ambition to position itself as a leading source for professional AI development. The competitive field, marked by the likes of OpenAI, sees Google’s AI model as a formidable contender, equipped with the potential to adapt to a myriad of applications.