This January, Google are once again rolling out AI updates at a rapid pace. Besides revealing new generative AI features for Google Cloud and Google Chrome, Google continued to push the boundaries with their latest innovation, Google Lumiere.

Since the debut of DALL-E 2 in 2022, text-to-image generators have seen a surge in interest, marked by the emergence of notable competitors. Fast forward over a year, and a new era in technology is emerging: AI video generation.

Google Lumiere marks a significant step forward for AI-generated video, bringing it closer to realism. Let’s look at how Google Lumiere is changing the landscape of AI video in its pursuit of more realistic results.

About Google Lumiere

Last Tuesday, January 23rd, Google Research unveiled Google Lumiere, a text-to-video diffusion model that can create exceptionally lifelike videos from text prompts and image inputs, as detailed in a recently published research paper.

Google Lumiere Explained

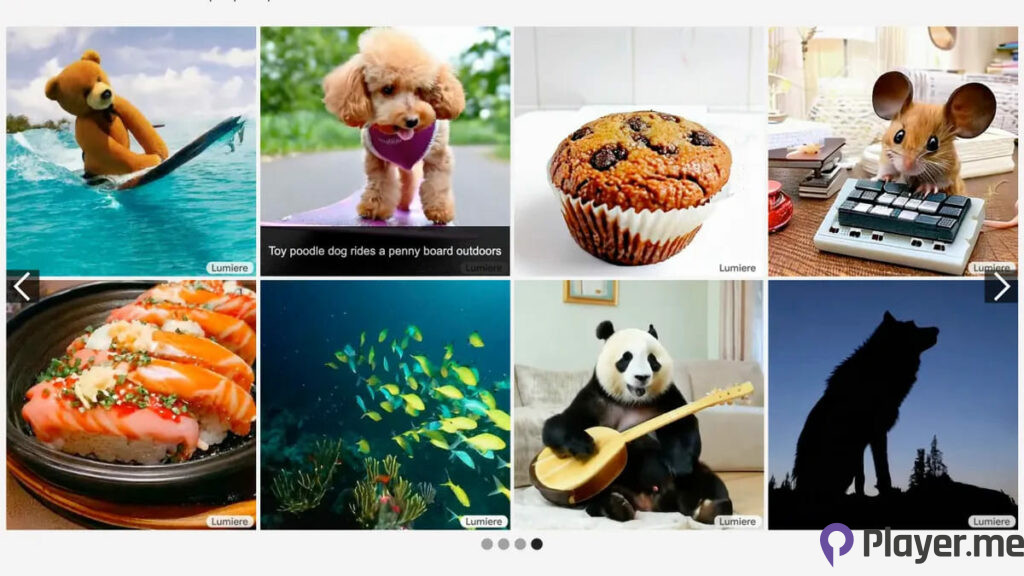

The Lumiere model addresses a key obstacle in video synthesis: creating “realistic, diverse, and coherent motion”, as outlined in the research paper. Unlike many video generation models, which often produce choppy results, Google’s approach aims for a smoother and more cohesive viewing experience, as demonstrated in the accompanying video.

The video clips are not only smooth to watch but also noticeably more realistic, a clear improvement over other models. Google Lumiere achieves this through its Space-Time U-Net architecture, which generates the entire temporal duration of a video in a single pass.

This approach differs from existing models that first synthesise distant keyframes and then fill in the frames between them with temporal super-resolution, a method the paper identifies as inherently challenging for maintaining consistency across the whole video.
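
To see why distant keyframes can make consistency hard, here is a toy sketch in Python. It is not Lumiere’s code or any published implementation: the motion function, the frame count, the keyframe stride, and the use of simple linear interpolation in place of a learned super-resolution model are all assumptions chosen for illustration. It compares a clip where every frame is produced directly against one rebuilt from sparse keyframes.

```python
import math

def true_position(t, period=8.0):
    """Hypothetical ground-truth motion: an object oscillating every `period` frames."""
    return math.sin(2 * math.pi * t / period)

def keyframe_interpolation(num_frames, stride):
    """Sample the motion only at keyframes `stride` apart, then linearly interpolate."""
    keys = list(range(0, num_frames, stride))
    if keys[-1] != num_frames - 1:
        keys.append(num_frames - 1)  # always keep the final frame as a keyframe
    frames = []
    for t in range(num_frames):
        # Index of the first keyframe at or after frame t.
        right = next(i for i, k in enumerate(keys) if k >= t)
        if keys[right] == t:
            frames.append(true_position(t))
            continue
        k0, k1 = keys[right - 1], keys[right]
        w = (t - k0) / (k1 - k0)
        frames.append((1 - w) * true_position(k0) + w * true_position(k1))
    return frames

def mean_abs_error(a, b):
    """Average per-frame difference between two clips."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

NUM_FRAMES = 64  # assumed clip length for this toy example
reference = [true_position(t) for t in range(NUM_FRAMES)]

# Sparse keyframes (one every 16 frames) miss the fast oscillation entirely.
error_sparse = mean_abs_error(reference, keyframe_interpolation(NUM_FRAMES, stride=16))
# Treating every frame as a keyframe reproduces the motion exactly.
error_dense = mean_abs_error(reference, keyframe_interpolation(NUM_FRAMES, stride=1))

print(f"sparse keyframes error: {error_sparse:.3f}")
print(f"all frames error:       {error_dense:.3f}")
```

In this toy setting the sparse version loses almost all of the motion because the keyframes land where the object is at rest. A real model is far more sophisticated, but the sketch hints at why handling the full clip at once can help keep motion coherent.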

What Can Google Lumiere Do?

Google Lumiere can generate video from several kinds of input. Text-to-video works much like a conventional image generator, creating a video from a given text prompt. Lumiere also supports image-to-video generation, stylised generation for creating videos in a specific style, cinemagraphs that animate a designated region of an image, and inpainting, where masked areas of a video are filled in or altered.

Stylised generation adds a creative dimension: Lumiere uses a single reference image to produce a video in the style the user asks for.

Beyond video generation, Lumiere has applications in video editing. It can restyle an existing video to match a text prompt. The model also supports cinemagraphs, which bring motion to a chosen region of a still image, and inpainting, which seamlessly fills in missing or damaged areas.

Performance Comparison

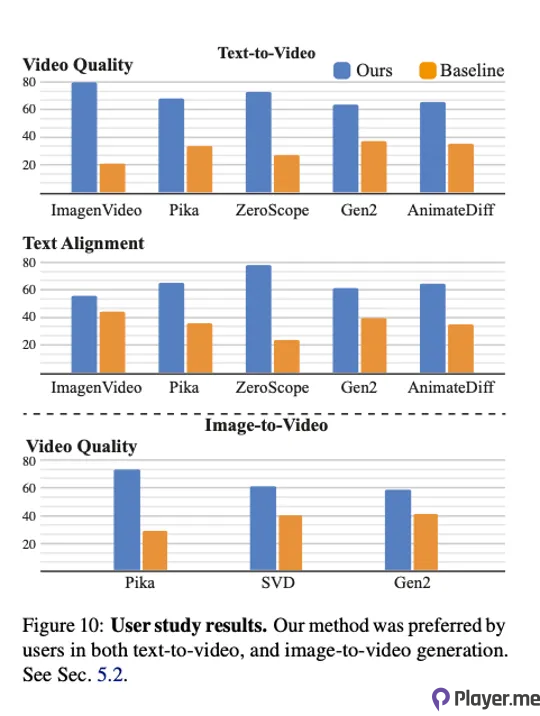

In their study, Google compared Lumiere to well-known text-to-video diffusion models such as ImagenVideo, Pika, ZeroScope, and Gen2. Testers were asked to evaluate visual quality and motion without knowing which model had generated each video. Testers preferred Lumiere over its counterparts across several categories, including text-to-video quality, text-to-video text alignment, and image-to-video quality.
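
As a rough sketch of how results from a blind comparison like this might be tallied, the Python snippet below computes the share of votes each side receives. It assumes a pairwise format in which each tester picks one of two unlabelled videos. The labels `model_a` and `baseline_b` and the votes themselves are placeholders for illustration only, not data from the paper.

```python
from collections import Counter

def preference_rates(votes):
    """Return the fraction of blind pairwise votes won by each label."""
    counts = Counter(votes)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no votes to tally")
    return {label: n / total for label, n in counts.items()}

# Placeholder votes for illustration only; these are not results from the paper.
example_votes = ["model_a", "baseline_b", "model_a", "model_a", "baseline_b"]
rates = preference_rates(example_votes)

for label, rate in sorted(rates.items()):
    print(f"{label}: preferred in {rate:.0%} of comparisons")
```

Because testers do not know which model produced which video, a preference rate well above 50% for one model suggests that viewers genuinely favour its output.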

Google’s Lumiere paper also acknowledges the potential for misuse, emphasising the importance of developing tools to detect biases and prevent malicious use cases to ensure safe and fair utilisation. However, the paper does not elaborate on how these measures would be implemented.

Final Say

Google have gradually entered the text-to-video domain with advanced AI models, shifting towards a more multimodal approach. In addition, the Gemini large language model is set to bring image generation to Bard in the future. Google Lumiere’s capabilities promise to change the way we perceive and experience AI-generated visual content.

While Lumiere is not currently available for testing, its development highlights Google’s capability to create an AI video platform that competes with, and arguably surpasses, commonly available AI video generators such as Runway and Pika. This progress reflects Google’s advancements in AI video technology over the past two years.
