The phrase “Taylor Swift AI” has captured the internet’s attention but, to the dismay of Swifties, not for positive reasons. Explicit AI-generated images of the singer have caused a stir on X (formerly Twitter). What obstacles stand in the way of removing them, and how are public sentiment and legal reactions shaping the narrative around this disconcerting incident? Let’s look at the details and the broader context of this development.

Unveiling the Taylor Swift AI Phenomenon

The controversy unfolded as sexually explicit AI-generated images of Taylor Swift surfaced on X, showcasing the dark side of artificial intelligence technology. The term “Taylor Swift AI” began trending globally, highlighting the proliferation of manipulated media and the potential harm it can inflict. These AI-generated images, depicting the singer in compromising positions, garnered millions of views and sparked widespread discussions.

Spread and Removal Challenges

One of the most striking aspects of this controversy is the rapid spread of these explicit AI-generated images. Despite X’s policies explicitly prohibiting such content, a post featuring these images gained over 45 million views before it was eventually taken down. The challenge lies not only in preventing the initial spread but also in combating reposts across various accounts and platforms.

Reports suggest that the origin of these images may be traced back to a Telegram group using Microsoft Designer, a tool for creating AI-generated content. The group’s activities underline the evolving landscape of explicit AI content creation and the difficulties platforms face in moderating such content effectively.

The Celeb Jihad Connection and the Deepfake Porn Challenge

The hosting of the explicit images on Celeb Jihad, a notorious deepfake porn website, adds another layer to the controversy. Celeb Jihad has a history of involvement in indecent scandals, including the posting of explicit images hacked from celebrities’ phones and iCloud accounts. The challenge posed by such websites lies in their ability to operate behind proxy IP addresses, staying ahead of traditional cybercrime investigators.

In the broader context, this incident with Taylor Swift is indicative of the rising trend in deepfake porn. According to an analysis shared in December, over 143,000 new deepfake videos were posted online in the past year alone, surpassing the cumulative total of previous years. Calls for the takedown of such websites and criminal investigations into their owners are mounting, but the challenges persist.

Public Outcry and Advocacy

Swift’s dedicated fan base, known as Swifties, swiftly took to social media to express their outrage over the explicit AI-generated images. Criticising X for what they perceived as a delayed response, fans initiated a counter-campaign by flooding hashtags with positive content, aiming to overshadow the explicit fakes. This public outcry highlights the emotional toll such incidents take on both celebrities and their followers.

Beyond expressing discontent, there is a growing concern about the online proliferation of explicit AI-generated material that disproportionately harms women and children. Families affected by these incidents are pushing for legislative safeguards, urging lawmakers to address the challenges posed by new AI models and websites openly advertising such services.

Legal Responses and Legislative Efforts

The incident raises questions about the ethical and legal implications of AI-generated explicit content. Some states already have laws against the creation and sharing of non-consensual deepfake images. President Joe Biden signed an executive order in October addressing the use of generative AI for producing explicit content without consent.

However, crafting effective legislation is not a straightforward task. The complexity of the issue requires careful consideration to avoid overreach and potential conflicts with First Amendment rights. Efforts are underway at both state and federal levels to establish regulations that can provide uniform protections while sending a strong message to perpetrators.

Social Media Platforms and AI Moderation Challenges

The controversy surrounding Taylor Swift AI sheds light on the challenges faced by social media platforms in moderating content effectively. X, in particular, has come under scrutiny for reportedly gutting its content moderation team and relying heavily on automated systems and user reporting. Investigations by the European Union into X’s content dissemination and disinformation practices are ongoing, adding another layer of complexity.

Meta, formerly Facebook, has also made cuts to teams addressing disinformation and harassment campaigns. These reductions raise concerns, especially in the context of pivotal events such as the 2024 elections in the United States, where misleading AI-generated content could potentially disrupt the democratic process.

Congressional Attention and the Call for Regulations

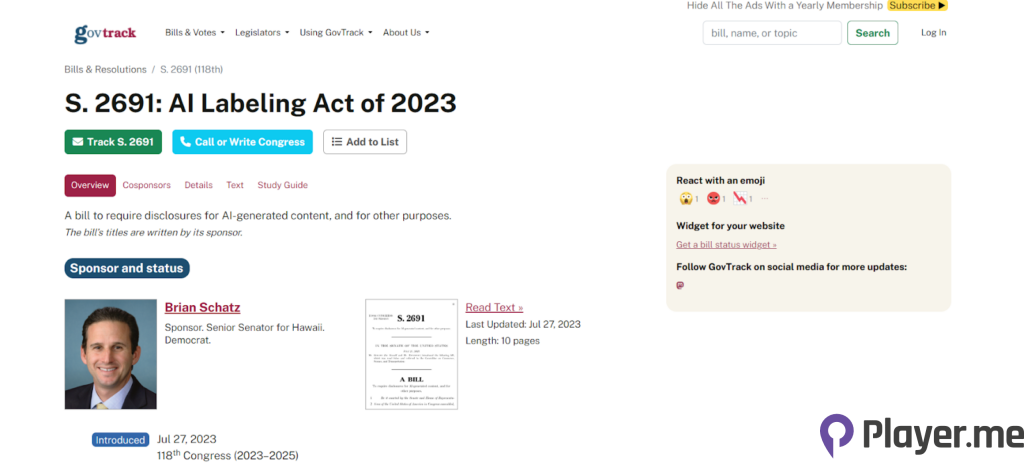

Lawmakers have taken notice of the risks associated with unregulated AI, with some expressing the need for legislative action. Senator Martin Heinrich of New Mexico highlighted the incident as a specific risk arising from unregulated AI and called for Congress to address the issue promptly. Representative Tom Kean of New Jersey has introduced the AI Labelling Act, emphasising the importance of clear indications for AI-generated content.

However, the path to effective regulation is fraught with challenges. Balancing the need for safeguards against AI abuses with the preservation of free speech rights requires careful navigation. The Electronic Frontier Foundation and other civil liberty advocates caution against overreaching legislation that could inadvertently stifle legitimate forms of expression.

The Unresolved Landscape of AI-Generated Content

The Taylor Swift AI controversy illuminates the complex and evolving landscape of AI-generated content, particularly in the realm of explicit material. The challenges faced by social media platforms, the legal system, and advocacy groups underscore the need for a nuanced approach to address the multifaceted issues surrounding AI-generated content.

As technology continues to advance, the battle against non-consensual AI material remains an ongoing struggle. The incident involving Taylor Swift has brought attention to the issue, and we will keep you informed of the latest tech- and AI-related developments with player.me.